Granola Integration

Turn meeting data into a productivity system that proves which meetings actually drive outcomes.

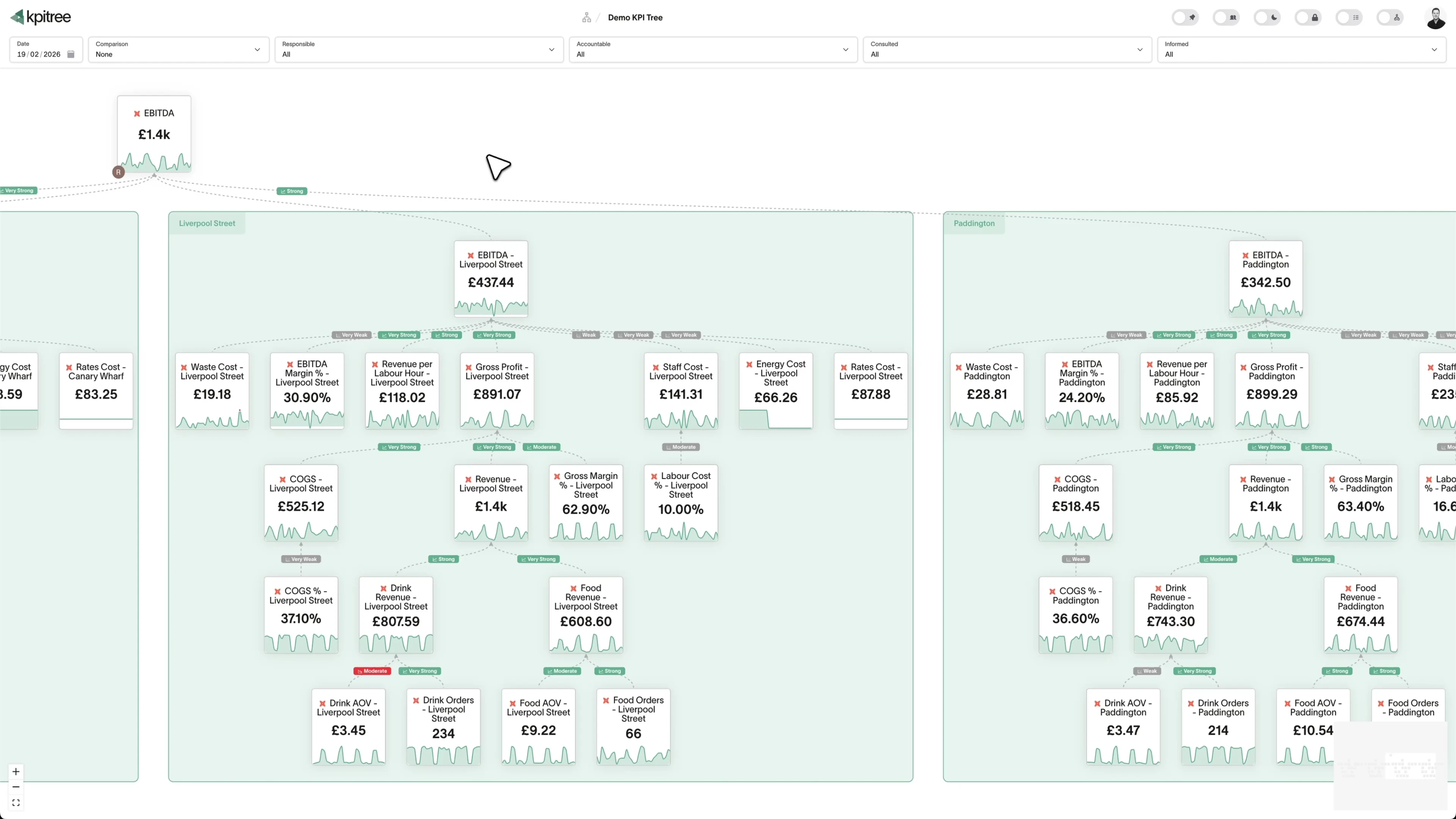

Granola captures meeting transcripts, action items, decisions, and follow-ups with AI-powered accuracy. But meeting data stuck inside a note-taking tool cannot show whether meetings are driving business outcomes or consuming time without impact. KPI Tree connects to your data warehouse where Granola data lands, maps it to metrics like meeting frequency, decision velocity, action item completion rate, and follow-up latency, then structures those metrics into causal trees that tie meeting health to team productivity and revenue. Every organisation suspects some meetings are unnecessary. Now you can prove which ones drive results and which ones do not.

From warehouse to meeting effectiveness tree in under an hour

Granola data flows to your warehouse through API integrations or custom pipelines. KPI Tree connects to the warehouse and turns that data into an owned, structured metric tree.

Connect your Granola data

Two ways to get started, depending on your stack.

Connect your existing warehouse where Granola data already lands.

If you don't have a warehouse yet, our professional services team can build turnkey AI foundations in a matter of weeks: a data warehouse on Snowflake or BigQuery, ELT with Fivetran, and everything modelled in dbt with a semantic layer.

Map meeting metrics from your warehouse tables

Define metrics from your Granola data using SQL or the metric builder: meeting frequency, average duration, action items generated per meeting, action item completion rate, decision count, follow-up latency, and more. Each metric supports dimensions like team, meeting type, and participant count.
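The metric builder's internals are not described on this page. To illustrate what a metric with a dimension computes, here is a minimal Python sketch that derives meeting frequency and average duration per team from warehouse rows. The `MeetingRow` shape and its field names are hypothetical stand-ins for whatever columns your Granola pipeline actually lands.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class MeetingRow:
    """Hypothetical row from a Granola meetings table in the warehouse."""
    team: str
    meeting_type: str
    duration_minutes: float


def frequency_and_avg_duration(rows: list[MeetingRow]) -> dict[str, tuple[int, float]]:
    """Return {team: (meeting count, average duration in minutes)}."""
    counts: dict[str, int] = defaultdict(int)
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        counts[row.team] += 1
        totals[row.team] += row.duration_minutes
    return {team: (counts[team], totals[team] / counts[team]) for team in counts}


rows = [
    MeetingRow("Product", "standup", 15),
    MeetingRow("Product", "planning", 60),
    MeetingRow("Sales", "pipeline review", 45),
]
print(frequency_and_avg_duration(rows))  # {'Product': (2, 37.5), 'Sales': (1, 45.0)}
```

In SQL the same metric is a `GROUP BY team` with `COUNT(*)` and `AVG(duration_minutes)`; adding `meeting_type` to the grouping key gives the meeting-type dimension.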

Build trees and assign ownership

Arrange meeting metrics into causal trees - action item completion drives project velocity, decision throughput drives alignment, meeting load drives available focus time. Assign RACI owners so team leads and chiefs of staff own the meeting health metrics for their scope.

Meeting metrics that connect to the outcomes meetings are supposed to produce

KPI Tree takes the Granola data already in your warehouse and adds the causal structure, ownership, and statistical analysis that turns meeting activity into measurable productivity impact.

Causal trees from meeting activity to business velocity

Meeting frequency and duration are inputs. Decisions made, action items completed, and project velocity are outputs. KPI Tree models the causal chain so leadership sees whether more meetings produce more outcomes - or just more meetings. When velocity drops, trace the cause through meeting health metrics instead of guessing.

Decision velocity as a measurable, owned metric

Granola captures decisions in meeting transcripts. KPI Tree turns decision count, time-to-decision, and decision follow-through into metrics with ownership and statistical monitoring. When decision velocity slows, the alert goes to the right leader with context on which meeting types or teams are the bottleneck.

Action item completion that closes the loop

Meetings generate action items. Granola captures them. KPI Tree tracks completion rate, time-to-completion, and overdue frequency as metrics in a causal tree. When action items pile up, the correlation engine shows the downstream impact on project delivery and team velocity.

Meeting load visible alongside the outcomes it produces.

Every team suspects they have too many meetings. But without data, the conversation stays anecdotal. KPI Tree tracks meeting frequency, duration, and participant hours from Granola data - then connects those metrics to productivity outcomes like project velocity, decision throughput, and focus time. The causal tree makes the trade-off explicit: does reducing meeting load for a team actually improve their output? The answer is statistical, not opinion-based.

- Meeting frequency, duration, and total participant hours as owned metrics

- Causal trees link meeting load to productivity outcomes and focus time

- Dimension breakdowns by team, meeting type, and recurring vs. ad-hoc

- Statistical correlations quantify whether fewer meetings mean more output

Decision velocity tracked from transcript to outcome.

Granola's AI captures decisions made in meetings. KPI Tree tracks how many decisions are made per meeting, how long decisions take from first discussion to resolution, and whether decisions lead to completed actions. Decision velocity becomes a first-class metric with an owner, a trend, and statistical alerts - not an anecdote in a retrospective. Leaders see whether their organisation is getting faster or slower at making and executing decisions.

- Decisions per meeting, time-to-decision, and decision follow-through as metrics

- Trend analysis shows whether decision velocity is improving or degrading

- Correlation engine links decision velocity to project delivery and revenue

- Ownership assigned to chiefs of staff, team leads, or programme managers

Action item completion that predicts project health.

Action items from meetings are the bridge between discussion and execution. When completion rates drop, projects stall. KPI Tree tracks action item generation rate, completion rate, time-to-completion, and overdue frequency from Granola data. These metrics sit in a causal tree alongside project delivery KPIs - so when a project falls behind, the tree shows whether unfinished meeting action items are a contributing factor.

- Action item completion rate, time-to-completion, and overdue frequency tracked

- Causal trees connect action item health to project delivery metrics

- Dimension breakdowns by team, meeting type, and assignee

- Automated alerts when action item backlogs cross statistical thresholds

Meeting culture measured, not debated.

Meeting culture is usually discussed in feelings, not numbers. KPI Tree changes that. Recurring meeting efficiency, participant-to-decision ratios, cross-functional meeting frequency, and meeting-free time per team - all tracked as metrics with trends, owners, and alerts. When an organisation launches a "meeting reset" initiative, the metric tree provides before-and-after measurement. Culture change becomes a managed, measurable programme.

- Recurring meeting efficiency and participant-to-decision ratios as metrics

- Cross-functional meeting frequency shows alignment investment

- Meeting-free focus time tracked as a productivity leading indicator

- Before-and-after measurement for meeting culture initiatives

How KPI Tree uses Granola data differently

Granola excels at capturing meeting content with AI. KPI Tree connects that content to business outcomes through causal structure, statistical analysis, and organisational accountability.

Meeting metrics as part of the productivity tree

Other tools report on meeting activity in isolation. KPI Tree places meeting metrics in causal trees alongside project delivery, team velocity, and revenue outcomes - making the relationship between meetings and results visible and statistical.

Decision velocity as a first-class metric

Most organisations track whether meetings happened, not whether they produced decisions. KPI Tree turns Granola's decision capture into a metric with an owner, a trend, and statistical monitoring - making decision throughput measurable and improvable.

Accountability for meeting culture

Team leads own their team's meeting metrics. Chiefs of staff own organisational meeting health. The metric tree makes meeting culture an owned, measured outcome - not an abstract complaint.

Metrics you can track

25 Granola metrics ready to add to your metric trees.

Action Item Completion Rate

Meeting Intelligence

Completion Rate = Completed Action Items / Total Action Items Assigned × 100

Action Item Completion Rate measures the percentage of action items captured during Granola-recorded meetings that are completed within their assigned timeframe. It is the most direct measure of whether meetings produce results or merely consume time.
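A minimal Python sketch of the formula above, assuming each action item row carries a due date and an optional completion date (the `ActionItem` fields are hypothetical). Because the definition counts items completed within their assigned timeframe, this version only counts items finished on or before their due date; drop that check if your definition counts any completion.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionItem:
    """Hypothetical shape of one action item row in the warehouse."""
    due: date
    completed_on: Optional[date]  # None while the item is still open


def completion_rate(items: list[ActionItem]) -> Optional[float]:
    """Completed Action Items / Total Action Items Assigned × 100.

    An item counts as completed only if it was finished on or before its due
    date. Returns None when nothing was assigned, since the rate is undefined.
    """
    if not items:
        return None
    completed = sum(
        1
        for item in items
        if item.completed_on is not None and item.completed_on <= item.due
    )
    return completed * 100 / len(items)


items = [
    ActionItem(due=date(2024, 5, 10), completed_on=date(2024, 5, 9)),   # on time
    ActionItem(due=date(2024, 5, 10), completed_on=date(2024, 5, 12)),  # late
    ActionItem(due=date(2024, 5, 17), completed_on=None),               # open
    ActionItem(due=date(2024, 5, 17), completed_on=date(2024, 5, 17)),  # on time
]
print(completion_rate(items))  # 50.0
```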

Action Item Distribution Balance

Meeting Intelligence

Action Item Distribution Balance measures how evenly meeting action items are distributed across team members. It identifies individuals who consistently receive a disproportionate share of action items, creating bottleneck and burnout risk, versus those who rarely take on commitments.

Action Item Velocity

Meeting Intelligence

Action Item Velocity = Average(Completion Timestamp − Assignment Timestamp)

Action Item Velocity measures the average time from when an action item is assigned in a Granola-recorded meeting to when it is completed, so lower values mean faster execution. It reflects the organisation's ability to translate meeting decisions into executed work and is a leading indicator of operational agility.
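The average in the formula can be sketched in Python with `datetime` arithmetic. This assumes each action item is available as a hypothetical `(assigned_at, completed_at)` pair, with `completed_at` set to `None` for items that are still open.

```python
from datetime import datetime, timedelta
from typing import Optional


def action_item_velocity(
    items: list[tuple[datetime, Optional[datetime]]],
) -> Optional[timedelta]:
    """Average(Completion Timestamp - Assignment Timestamp).

    Each tuple is (assigned_at, completed_at). Open items have no completion
    timestamp yet, so they are left out of the average.
    """
    durations = [done - assigned for assigned, done in items if done is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


items = [
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 7, 9, 0)),  # 1 day
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 9, 9, 0)),  # 3 days
    (datetime(2024, 5, 6, 9, 0), None),                         # still open
]
print(action_item_velocity(items))  # 2 days, 0:00:00
```

Skipping open items means a growing backlog of unfinished work does not slow this average down, which is why it pairs naturally with overdue frequency.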

Conversation Topic Analysis

Meeting Intelligence

Conversation Topic Analysis uses Granola's meeting transcripts to identify and categorise the topics discussed in meetings, measuring how much time is allocated to each. It reveals whether meeting time is spent on strategic priorities or consumed by recurring operational issues.

Cross-Team Collaboration Rate

Meeting Intelligence

Cross-Team Rate = Meetings with Multi-Team Participants / Total Meetings × 100

Cross-Team Collaboration Rate measures the percentage of meetings that include participants from two or more different teams or departments. It serves as a proxy for organisational connectivity and the degree to which teams are breaking down silos through direct interaction.
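A minimal Python sketch of the rate, assuming each meeting is stored as a list of participant IDs and that a hypothetical `team_of` lookup (for example, an HR directory joined in the warehouse) maps each participant to a team. Participants with no known team are ignored.

```python
from typing import Optional


def cross_team_rate(
    meetings: list[list[str]], team_of: dict[str, str]
) -> Optional[float]:
    """Meetings with Multi-Team Participants / Total Meetings × 100."""
    if not meetings:
        return None
    multi_team = sum(
        1
        for participants in meetings
        # Collect the distinct teams present; two or more means cross-team.
        if len({team_of[p] for p in participants if p in team_of}) >= 2
    )
    return multi_team * 100 / len(meetings)


team_of = {"ana": "Eng", "ben": "Eng", "cara": "Design", "dev": "Sales"}
meetings = [
    ["ana", "ben"],          # Eng only
    ["ana", "cara"],         # Eng + Design
    ["ben", "dev", "cara"],  # Eng + Sales + Design
    ["dev", "ana"],          # Sales + Eng
]
print(cross_team_rate(meetings, team_of))  # 75.0
```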

Decision Velocity Tracking

Meeting Intelligence

Decision Velocity Tracking measures how quickly decisions are reached within and across meetings, from initial discussion to final commitment. It identifies topics that are decided efficiently versus those that cycle through multiple meetings without resolution, consuming time and delaying progress.

Knowledge Transfer Effectiveness

Meeting Intelligence

Knowledge Transfer Effectiveness measures how successfully meetings disseminate information to participants and non-participants alike. It evaluates note quality, transcript sharing rates, follow-up documentation, and whether meeting outcomes reach everyone who needs them - not just those who attended.

Meeting Attendance Rate

Meeting Intelligence

Attendance Rate = Actual Attendees / Invited Participants × 100

Meeting Attendance Rate measures the percentage of invited participants who actually attend a meeting. Low attendance suggests that a meeting is perceived as low-value, is poorly timed, or has too broad an invite list. High attendance indicates relevance and effective scheduling.
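A minimal Python sketch using set operations, assuming invitees and attendees are available as sets of participant IDs. Counting only attendees who were on the invite list is a design choice made here, not something the definition specifies: it keeps people who joined via a forwarded link from pushing the rate above 100%.

```python
from typing import Optional


def attendance_rate(invited: set[str], attended: set[str]) -> Optional[float]:
    """Actual Attendees / Invited Participants × 100.

    Only invited attendees are counted (set intersection).
    """
    if not invited:
        return None
    return len(invited & attended) * 100 / len(invited)


invited = {"ana", "ben", "cara", "dev", "eve"}
attended = {"ana", "ben", "cara", "zoe"}  # zoe was not invited
print(attendance_rate(invited, attended))  # 60.0
```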

Meeting Cadence Optimisation

Meeting Intelligence

Meeting Cadence Optimisation evaluates whether the frequency of recurring meetings matches actual communication needs. It analyses patterns such as short meetings that could be async, cancelled occurrences that suggest over-scheduling, and meeting clusters that fragment focus time.

Meeting Conflict Resolution Rate

Meeting Intelligence

Meeting Conflict Resolution Rate measures the percentage of discussions involving disagreement or conflicting viewpoints that reach a constructive resolution within the meeting. It distinguishes between productive debate that drives better decisions and unresolved conflict that festers and delays progress.

Meeting Cost per Outcome

Meeting Intelligence

Cost per Outcome = (Sum of Participant Hourly Rates × Meeting Duration) / Number of Outcomes

Meeting Cost per Outcome calculates the economic cost of each tangible meeting output - decisions made, action items assigned, or problems resolved - by dividing the total cost of participant time by the number of outcomes produced. It transforms abstract meeting complaints into concrete financial data.
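The cost formula can be sketched in Python as follows. Participant hourly rates are an assumption here: Granola does not record them, so they would come from finance or HR data joined in the warehouse. The example figures are invented.

```python
from typing import Optional


def cost_per_outcome(
    hourly_rates: list[float], duration_minutes: float, outcomes: int
) -> Optional[float]:
    """(Sum of Participant Hourly Rates × Meeting Duration) / Number of Outcomes.

    Duration is converted from minutes to hours so it matches hourly rates.
    Returns None for a meeting with no outcomes, where the ratio is undefined.
    """
    if outcomes == 0:
        return None
    meeting_cost = sum(hourly_rates) * duration_minutes / 60
    return meeting_cost / outcomes


# Four participants in a 30-minute meeting that produced 2 decisions
# and 1 action item (3 outcomes).
print(cost_per_outcome([80.0, 60.0, 60.0, 100.0], 30, 3))  # 50.0
```

Returning `None` rather than infinity for zero-outcome meetings keeps them out of averages; those meetings show up instead in Meeting Outcome Effectiveness.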

Meeting Duration Analysis

Meeting Intelligence

Meeting Duration Analysis compares actual meeting duration (as recorded by Granola) to scheduled duration, and tracks duration trends across meeting types. It identifies meetings that consistently overrun, those that end early (potentially unnecessarily scheduled), and opportunities to right-size meeting blocks.
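A minimal Python sketch of the overrun and early-finish comparison. It assumes scheduled length comes from the calendar and actual length from Granola, both in a hypothetical `MeetingDuration` row. The ±10% tolerance band is purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class MeetingDuration:
    """Hypothetical row: scheduled length from the calendar, actual from Granola."""
    meeting_type: str
    scheduled_minutes: float  # assumed > 0
    actual_minutes: float


def classify(meeting: MeetingDuration, tolerance: float = 0.10) -> str:
    """Label a meeting relative to its scheduled length.

    Anything outside the +/- tolerance band counts as an overrun or an
    early finish.
    """
    ratio = meeting.actual_minutes / meeting.scheduled_minutes
    if ratio > 1 + tolerance:
        return "overran"
    if ratio < 1 - tolerance:
        return "ended early"
    return "on time"


meetings = [
    MeetingDuration("standup", 15, 22),
    MeetingDuration("planning", 60, 40),
    MeetingDuration("1:1", 30, 31),
]
print([classify(m) for m in meetings])  # ['overran', 'ended early', 'on time']
```

Grouping these labels by `meeting_type` shows which meeting types consistently overrun and which could use a shorter block.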

Meeting Follow-Up Rate

Meeting Intelligence

Follow-Up Rate = Meetings with Post-Meeting Actions / Total Meetings × 100

Meeting Follow-Up Rate measures the percentage of meetings followed by post-meeting actions such as sharing notes, distributing transcripts, updating tasks in project management tools, or sending summary communications. It indicates whether meetings produce persistent outputs or evaporate after the call ends.
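A minimal Python sketch of the rate, assuming each meeting has hypothetical boolean flags for the post-meeting actions listed above. How those flags get populated (for example, from project management tool events) is outside this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FollowUpSignals:
    """Hypothetical per-meeting flags for post-meeting actions."""
    notes_shared: bool = False
    transcript_distributed: bool = False
    tasks_updated: bool = False
    summary_sent: bool = False

    def has_follow_up(self) -> bool:
        # Any single post-meeting action is enough to count the meeting.
        return (
            self.notes_shared
            or self.transcript_distributed
            or self.tasks_updated
            or self.summary_sent
        )


def follow_up_rate(meetings: list[FollowUpSignals]) -> Optional[float]:
    """Meetings with Post-Meeting Actions / Total Meetings × 100."""
    if not meetings:
        return None
    followed_up = sum(1 for m in meetings if m.has_follow_up())
    return followed_up * 100 / len(meetings)


meetings = [
    FollowUpSignals(notes_shared=True),
    FollowUpSignals(),
    FollowUpSignals(tasks_updated=True, summary_sent=True),
    FollowUpSignals(),
    FollowUpSignals(),
]
print(follow_up_rate(meetings))  # 40.0
```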

Meeting Frequency Rate

Meeting Intelligence

Meeting Frequency Rate = Total Meetings / Time Period

Meeting Frequency Rate measures the number of meetings per person, team, or organisation within a given period. It provides visibility into meeting load and its impact on available focus time. High frequency rates correlate with reduced productivity, particularly for roles that require deep, uninterrupted work.
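A minimal Python sketch of the per-person breakdown, assuming you have one participant list per meeting held during the period and choose weeks as the time unit. Both choices are assumptions for illustration.

```python
from collections import Counter


def meetings_per_person_per_week(
    attendance: list[list[str]], weeks: float
) -> dict[str, float]:
    """Total Meetings / Time Period, broken down per participant."""
    if weeks <= 0:
        raise ValueError("weeks must be positive")
    # Count how many meetings each participant attended in the period.
    counts = Counter(p for participants in attendance for p in participants)
    return {person: n / weeks for person, n in counts.items()}


# Four meetings over a two-week period.
attendance = [["ana", "ben"], ["ana"], ["ana", "cara"], ["ben", "cara"]]
print(meetings_per_person_per_week(attendance, weeks=2))
# {'ana': 1.5, 'ben': 1.0, 'cara': 1.0}
```

Swapping the participant key for a team key gives the team-level rate; dividing the total meeting count by the period gives the organisation-level rate.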

Meeting Outcome Effectiveness

Meeting Intelligence

Outcome Effectiveness = Meetings with Tangible Outcomes / Total Meetings × 100

Meeting Outcome Effectiveness measures the proportion of meetings that produce at least one tangible outcome - a decision made, an action item assigned, a problem resolved, or a plan agreed upon. It separates productive meetings from informational sessions and status updates that could be asynchronous.
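A minimal Python sketch of the proportion, assuming hypothetical per-meeting counts for each outcome type named in the definition. A meeting counts as productive if any of the counts is non-zero.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MeetingOutcomes:
    """Hypothetical per-meeting outcome counts extracted from Granola notes."""
    decisions: int = 0
    action_items: int = 0
    problems_resolved: int = 0
    plans_agreed: int = 0

    def is_productive(self) -> bool:
        # At least one tangible outcome of any kind.
        return (
            self.decisions + self.action_items
            + self.problems_resolved + self.plans_agreed
        ) > 0


def outcome_effectiveness(meetings: list[MeetingOutcomes]) -> Optional[float]:
    """Meetings with Tangible Outcomes / Total Meetings × 100."""
    if not meetings:
        return None
    productive = sum(1 for m in meetings if m.is_productive())
    return productive * 100 / len(meetings)


meetings = [
    MeetingOutcomes(decisions=2, action_items=3),
    MeetingOutcomes(),                     # status update, nothing tangible
    MeetingOutcomes(problems_resolved=1),
    MeetingOutcomes(plans_agreed=1),
    MeetingOutcomes(action_items=1),
]
print(outcome_effectiveness(meetings))  # 80.0
```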

Meeting Preparation Score

Meeting Intelligence

Meeting Preparation Score evaluates how well-prepared meetings are by assessing factors such as agenda availability, pre-read distribution, supporting material quality, and participant readiness. Well-prepared meetings are consistently more productive, shorter, and better received than ad hoc gatherings.

Meeting ROI Analysis

Meeting Intelligence

Meeting ROI Analysis quantifies the return on investment of meetings by comparing the value of outcomes produced - decisions enabling revenue, problems resolved, projects unblocked - against the opportunity cost of participant time. It treats meetings as an investment that should produce measurable returns.

Meeting Sentiment Analysis

Meeting Intelligence

Meeting Sentiment Analysis applies natural language processing to Granola meeting transcripts to classify the overall emotional tone - positive, neutral, or negative - and track sentiment shifts during the meeting. It provides an objective measure of meeting atmosphere that complements subjective feedback.

Meeting Tag Frequency Analysis

Meeting Intelligence

Meeting Tag Frequency Analysis examines how tags and labels are applied to meetings in Granola, measuring tag usage patterns, consistency, and coverage. Effective tagging enables portfolio-level meeting analysis - understanding how time is allocated across strategic themes, project types, and meeting purposes.

Note Quality Score

Meeting Intelligence

Note Quality Score evaluates the completeness and usefulness of Granola's AI-generated meeting notes by assessing factors such as action item capture rate, decision documentation, key discussion point coverage, and participant attribution accuracy. High-quality notes serve as reliable records of what was discussed and decided.

Participant Engagement Score

Meeting Intelligence

Participant Engagement Score measures how actively each meeting participant contributes, considering factors such as speaking time, questions asked, ideas contributed, and interaction with other participants. It identifies silent attendees who may not need to be present and highlights facilitation opportunities to improve inclusivity.

Participant Network Analysis

Meeting Intelligence

Participant Network Analysis maps the connections between individuals based on shared meeting attendance, creating a network graph that reveals who collaborates with whom, identifies key connectors who bridge different groups, and surfaces isolated clusters with limited cross-pollination.

Participant Speaking Time Distribution

Meeting Intelligence

Participant Speaking Time Distribution measures how meeting speaking time is allocated across participants. It uses the Gini coefficient of speaking time to distinguish meetings dominated by one or two voices from those with balanced participation. Balanced distribution correlates with higher-quality decisions and better participant satisfaction.
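The Gini coefficient can be computed in a few lines of Python. This sketch assumes per-participant speaking time in seconds is available for each meeting, and it uses the mean-absolute-difference form of the Gini coefficient. That form is O(n²) in the number of participants, which is fine at meeting scale.

```python
def speaking_time_gini(seconds: list[float]) -> float:
    """Gini coefficient of speaking time across a meeting's participants.

    G = sum_i sum_j |x_i - x_j| / (2 * n * sum_i x_i)
    0.0 means everyone spoke equally; with n participants the maximum is
    (n - 1) / n, reached when one person does all the talking.
    """
    n = len(seconds)
    total = sum(seconds)
    if n == 0 or total == 0:
        return 0.0  # nobody spoke: treat as no imbalance
    abs_diffs = sum(abs(a - b) for a in seconds for b in seconds)
    return abs_diffs / (2 * n * total)


print(speaking_time_gini([300, 300, 300, 300]))  # 0.0
print(speaking_time_gini([400, 200, 200, 0]))    # 0.375
print(speaking_time_gini([600, 0, 0, 0]))        # 0.75
```

Because the maximum depends on n, comparing meetings of very different sizes may call for normalising by (n - 1) / n.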

Recurring Meeting Efficiency Trends

Meeting Intelligence

Recurring Meeting Efficiency Trends tracks how the productivity of recurring meetings changes over time, measuring outcome production, duration adherence, attendance stability, and participant engagement across successive occurrences. It reveals whether recurring meetings are improving, stagnating, or degrading.

Transcript Keyword Trending

Meeting Intelligence

Transcript Keyword Trending analyses the frequency and trajectory of specific keywords and phrases across Granola meeting transcripts over time. It surfaces emerging topics, shifting priorities, and recurring concerns that might not be visible in individual meetings but become apparent in aggregate.


Common questions

- KPI Tree connects to Granola data through your warehouse. Granola ships an early-access MCP server on Business and Enterprise plans, but the server is admin-gated and off by default, so the production connection today is warehouse-first. Teams that already export Granola meeting metadata, transcripts, action items, and decisions to Snowflake, BigQuery, or another warehouse via the Granola API point KPI Tree at the warehouse, and KPI Tree reads those tables in place. Teams that do not yet have that pipeline can engage our professional services team, which stands up the warehouse, configures the ELT against the Granola API, and ships a dbt model for meeting effectiveness as a fixed-scope engagement.

- You can track any metric derivable from your Granola warehouse tables: meeting frequency, average duration, participant hours, decision count, decisions per meeting, action item generation rate, action item completion rate, time-to-completion, follow-up latency, and more. Metrics support dimensions such as team, meeting type, and participant count.

- KPI Tree does not read raw transcripts. It reads aggregate metrics from your data warehouse: structured metadata such as meeting counts, durations, action item statuses, and decision counts, not the content of conversations.

- If your Granola data is already in a warehouse, connecting KPI Tree takes under an hour and most teams have a working meeting effectiveness tree within a single session. If you need the AI foundations built from scratch, our professional services team handles the setup end to end, typically a few weeks, and delivers a warehouse, an ELT pipeline against the Granola API, and dbt models for meeting effectiveness metrics.

- Connecting meeting metrics to project delivery is the core value. Meeting metrics from Granola sit in the same causal tree as project delivery metrics from Jira, Linear, or Asana, and the correlation engine statistically measures whether meeting patterns predict project outcomes.

- KPI Tree is especially valuable for remote and hybrid teams, which rely more heavily on meetings as their primary collaboration mechanism. Tracking meeting effectiveness, decision velocity, and action item follow-through provides visibility that co-located teams get informally but distributed teams often lack.

- Ownership of meeting metrics varies by organisation. Common patterns: team leads own their team's meeting metrics, chiefs of staff own organisational meeting health, and programme managers own cross-functional meeting effectiveness. KPI Tree's RACI model supports any ownership structure.

- KPI Tree's comparison period feature lets you measure meeting metrics before and after any intervention. Track whether reducing recurring meetings actually improved focus time, decision velocity, or project delivery, with statistical confidence rather than anecdotal claims.


Your meetings generate data. Find out which ones generate results.

Connect your warehouse to KPI Tree and turn Granola meeting data into a productivity system with causal metric trees, decision velocity tracking, and statistical proof of which meetings drive outcomes.