Intercom Integration

Turn Intercom conversations into a support performance system that drives retention.

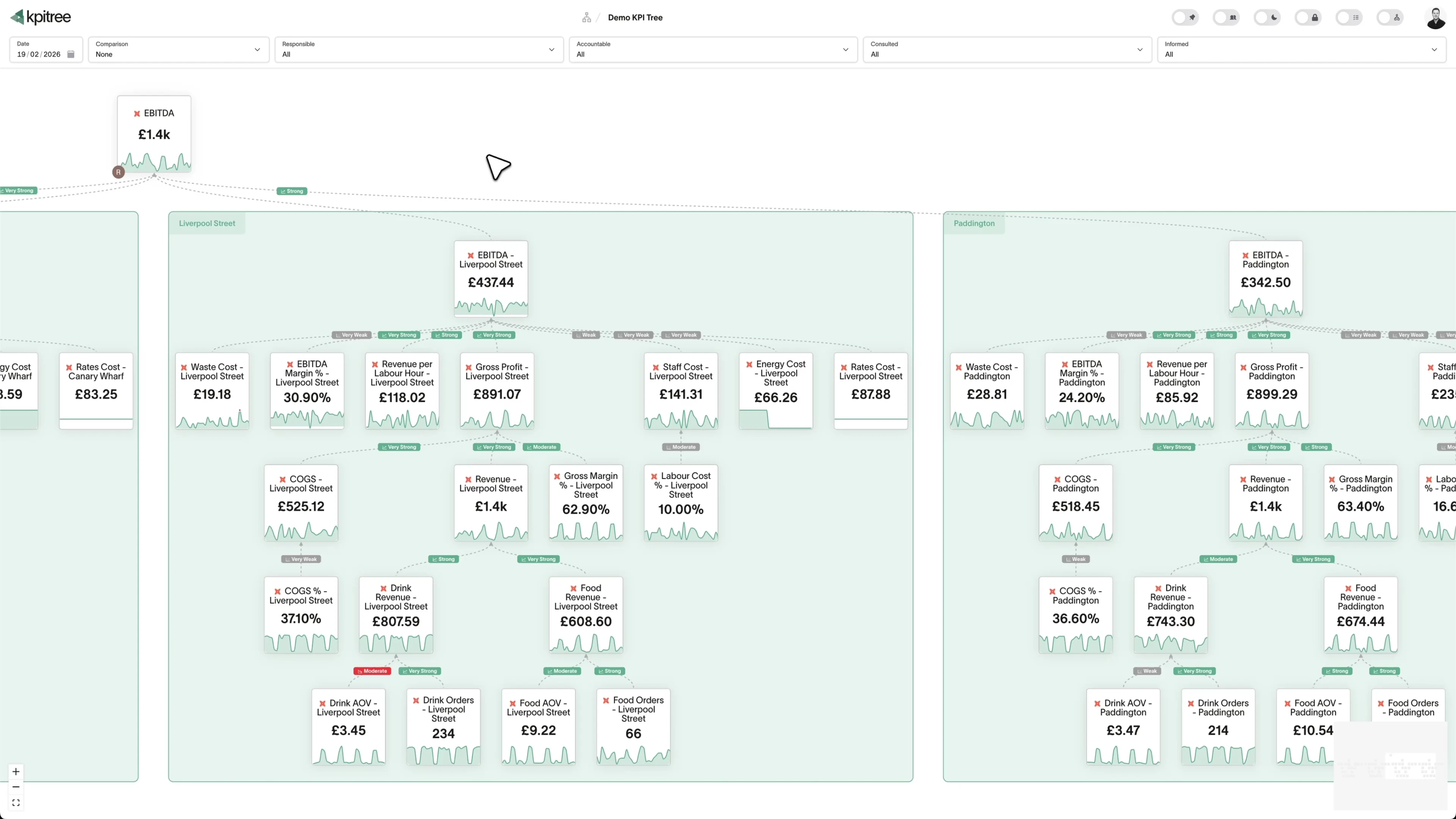

Intercom captures every customer conversation, bot interaction, help centre visit, and satisfaction rating. But support metrics stuck inside Intercom cannot show their impact on churn, expansion, or product adoption. KPI Tree consumes Intercom data through Intercom's official MCP server at `mcp.intercom.com` (currently supported for US-hosted Intercom workspaces), through your warehouse where Intercom data already lands via Fivetran or Airbyte, or through a professional services engagement that builds the stack for you. Once connected, KPI Tree maps it to metrics like first response time, resolution rate, CSAT, and bot deflection, then structures those metrics into causal trees that connect support performance directly to retention and revenue. Support stops being a cost centre in a spreadsheet and becomes a growth lever with clear ownership.

From Intercom events to owned support trees

KPI Tree consumes Intercom through Intercom's official MCP server (US workspaces), through your warehouse where Intercom data already lands, or through a professional services engagement.

Connect your Intercom data

Three ways to get started, depending on your stack.

Pull metrics from Intercom directly through the Model Context Protocol.

Connect your existing warehouse where Intercom data already lands.

Our professional services team can build turn-key AI foundations for you in a matter of weeks: a data warehouse on Snowflake or BigQuery, ELT with Fivetran, and models in dbt with a semantic layer.

Map Intercom metrics from your warehouse tables

Define metrics from your Intercom data using SQL or the metric builder: first response time, resolution time, CSAT score, conversation volume, bot deflection rate, help centre article views, and more. Each metric supports dimensions like team, tag, topic, and channel.
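As a rough illustration of a SQL-defined metric, the sketch below computes average first response time with team as a dimension. It runs against an in-memory SQLite database so it is self-contained. The `conversations` table and its column names are assumptions made for this example; the schema that Fivetran or Airbyte lands in your warehouse will differ.

```python
import sqlite3

# Hypothetical warehouse table; real Fivetran/Airbyte schemas will differ.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE conversations (
           id INTEGER PRIMARY KEY,
           team TEXT,
           created_at INTEGER,           -- Unix seconds
           first_admin_reply_at INTEGER  -- Unix seconds, NULL if unanswered
       )"""
)
conn.executemany(
    "INSERT INTO conversations VALUES (?, ?, ?, ?)",
    [
        (1, "EMEA", 1000, 1120),
        (2, "EMEA", 2000, 2240),
        (3, "APAC", 3000, 3060),
        (4, "APAC", 4000, None),  # no reply yet, excluded from the average
    ],
)

# Metric: average first response time in seconds, grouped by the team dimension.
FIRST_RESPONSE_TIME_SQL = """
    SELECT team, AVG(first_admin_reply_at - created_at) AS avg_frt_seconds
    FROM conversations
    WHERE first_admin_reply_at IS NOT NULL
    GROUP BY team
    ORDER BY team
"""
frt_by_team = dict(conn.execute(FIRST_RESPONSE_TIME_SQL).fetchall())
print(frt_by_team)  # {'APAC': 60.0, 'EMEA': 180.0}
```

Swapping `team` for a tag, topic, or channel column in the `GROUP BY` clause gives the same metric along a different dimension.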

Build trees and assign ownership

Arrange Intercom metrics into causal trees - bot deflection drives conversation volume, which drives resolution time, which drives CSAT, which drives retention. Assign RACI owners to every metric so the right support lead is accountable when any metric moves.
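To make the tree structure concrete, here is a minimal sketch of the chain described above as a plain data structure. The `Metric` class, its methods, and the owner titles are hypothetical and only illustrate the idea. This is not KPI Tree's internal model or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Metric:
    name: str
    owner: str  # hypothetical "Accountable" owner in RACI terms
    parent: Optional["Metric"] = field(default=None, repr=False)
    children: List["Metric"] = field(default_factory=list, repr=False)

    def add_driver(self, name: str, owner: str) -> "Metric":
        """Attach a child metric that drives this one."""
        child = Metric(name, owner, parent=self)
        self.children.append(child)
        return child

    def path_to_root(self) -> List["Metric"]:
        """Walk upward from this metric to the top of the tree."""
        path, node = [], self
        while node is not None:
            path.append(node)
            node = node.parent
        return path


# Build the chain from the text: deflection -> volume -> resolution -> CSAT -> retention.
retention = Metric("Retention", "VP Customer Success")
csat = retention.add_driver("CSAT", "Head of Support")
resolution = csat.add_driver("Resolution time", "Support Team Lead")
volume = resolution.add_driver("Conversation volume", "Support Operations")
deflection = volume.add_driver("Bot deflection rate", "Automation Lead")

# When deflection moves, list every downstream metric and who is accountable for it.
for metric in deflection.path_to_root():
    print(f"{metric.name} (owner: {metric.owner})")
```

Storing a parent link on each node keeps the upward trace cheap, which is the operation a support lead needs when a leaf metric moves.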

Support metrics that prove their impact on the business

KPI Tree takes the Intercom data already in your warehouse and adds the causal structure, ownership, and statistical analysis that connects support performance to business outcomes.

Causal trees from conversation to retention

First response time drives CSAT. CSAT drives NPS. NPS drives retention. KPI Tree models these relationships as a tree so every support leader sees exactly how their metrics connect to the revenue metrics the board cares about. When CSAT drops, trace the cause through the tree instead of running ad-hoc queries.

Statistical analysis across support and product metrics

Pearson correlations and Granger causality tests reveal whether faster resolution times actually correlate with lower churn, or whether bot deflection improvements drive CSAT gains. Replace anecdotal arguments with statistical evidence that support investment pays off.

Alerts that reach the right person with the right context

When conversation volume spikes or CSAT drops below statistical norms, KPI Tree alerts the metric owner via Slack, email, or SMS - with context on what caused the change and which downstream metrics are at risk. No more monitoring dashboards manually.
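The text does not specify how "statistical norms" are computed, so the following is only one common approach: flag a value when it sits more than a chosen number of standard deviations from the recent mean. The function name, the threshold of 3, and the sample data are assumptions.

```python
from statistics import mean, stdev


def is_outlier(history, latest, threshold=3.0):
    """Return True when `latest` is more than `threshold` standard deviations from the mean of `history`."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # flat history: any change is unusual
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily CSAT percentages for the previous week.
csat_history = [92, 91, 93, 92, 90, 92, 91]

print(is_outlier(csat_history, 91))  # False: within the normal range
print(is_outlier(csat_history, 80))  # True: a drop worth alerting the owner about
```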

Support metrics that connect upward to revenue.

Conversation volume, first response time, resolution time, and CSAT are not isolated numbers - they are nodes in a tree that leads to retention, expansion, and revenue. KPI Tree makes those connections explicit and statistical. When the VP of Support needs to justify headcount, the tree shows exactly how support capacity drives response time, which drives CSAT, which drives net revenue retention. The argument is structural, not anecdotal.

- Conversation volume, response time, resolution time, and CSAT as tree nodes

- Causal relationships connect support metrics to retention and revenue

- Statistical correlations quantify the impact of support improvements

- Executive stakeholders see support impact without learning Intercom

Bot deflection and self-service measured by business impact.

Intercom Fin and your help centre exist to deflect conversations. But deflection rate alone does not tell you whether self-service is working - what matters is whether it reduces agent load without hurting satisfaction. KPI Tree tracks deflection rate alongside CSAT, re-contact rate, and resolution time in a causal tree. If deflection increases but CSAT drops, the tree surfaces the trade-off before it becomes a retention problem.

- Bot deflection rate, help centre views, and self-service resolution as metrics

- Causal trees link deflection to CSAT, re-contact rate, and agent workload

- Dimension breakdowns by topic, tag, and channel isolate where self-service works

- Automated alerts when deflection and satisfaction diverge
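The divergence check in the last bullet can be sketched as a comparison between two periods. This is a simplified illustration under assumed inputs (percentages for each period) and an assumed minimum change of one percentage point. The source does not describe the actual alerting logic.

```python
def deflection_csat_diverging(deflection_prev, deflection_now,
                              csat_prev, csat_now, min_change=1.0):
    """True when deflection rose and CSAT fell, each by at least `min_change` points."""
    deflection_up = (deflection_now - deflection_prev) >= min_change
    csat_down = (csat_prev - csat_now) >= min_change
    return deflection_up and csat_down


# Hypothetical month-over-month values, in percent.
print(deflection_csat_diverging(30.0, 38.0, 91.0, 86.0))  # True: a trade-off to investigate
print(deflection_csat_diverging(30.0, 38.0, 91.0, 91.5))  # False: deflection rose without hurting CSAT
```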

Team performance visible without building custom reports.

Intercom reports show you what happened. KPI Tree shows you why it happened and who is responsible. Metrics break down by team, individual agent, topic, and channel - each dimension generating child metrics with their own owners. A team lead sees their team's resolution time trending up, traces it to a specific topic via the correlation engine, and assigns an action - all without leaving the metric tree.

- Dimension breakdowns by team, agent, topic, tag, and channel

- RACI ownership at every level - team leads own team metrics, agents own theirs

- Correlation engine links team performance to topic and channel patterns

- Actions tracked against specific metrics with impact verified after the fact

Support capacity planning backed by statistical trends.

Conversation volume patterns, seasonal spikes, and trend shifts - KPI Tree's statistical engine detects them automatically and surfaces them to capacity planners. Instead of reacting to volume surges after they happen, support leaders see trend changes as they emerge and plan staffing accordingly. The causal tree shows how volume drives response time, which drives CSAT - making the cost of understaffing explicit and quantifiable.

- Automated outlier detection identifies volume spikes and trend shifts

- Causal trees quantify the downstream impact of volume changes on CSAT

- Historical comparison periods show seasonal patterns automatically

- Staffing decisions backed by statistical trends, not gut feel

How KPI Tree uses Intercom data differently

Intercom's built-in reporting shows support activity. KPI Tree connects that activity to business outcomes through causal structure, statistical analysis, and cross-functional ownership.

Causal trees, not activity reports

Intercom shows conversation counts and resolution times. KPI Tree arranges those metrics in causal trees that connect to retention and revenue - making support's business impact visible and accountable.

Statistical proof that support drives retention

Correlations and causality tests between support metrics and business KPIs replace anecdotal claims with evidence. Test whether faster resolution reduces churn, or whether bot improvements drive CSAT gains.

Cross-functional accountability, not siloed dashboards

Support metrics connect to product, success, and revenue metrics in a single tree. When support quality degrades, the impact on retention is visible to everyone - not just the support team.

Metrics you can track

25 Intercom metrics ready to add to your metric trees.

Agent Performance Analysis

Customer Support · Metric Definition

Agent Performance Analysis evaluates individual support agents across multiple dimensions including response time, resolution rate, customer satisfaction scores, and conversation volume handled. It provides a balanced scorecard that avoids over-indexing on speed at the expense of quality.

Agent Utilisation Rate

Customer Support · Metric Definition

Agent Utilisation Rate = Active Handling Time / Total Available Time × 100

Agent Utilisation Rate measures the percentage of an agent's available time spent actively handling conversations versus idle or performing administrative tasks. It helps balance workload to prevent both burnout from over-utilisation and waste from under-utilisation.

Article Effectiveness Score

Customer Support · Metric Definition

Article Effectiveness Score measures how successfully help centre articles resolve customer queries without requiring further human support. It considers article views, positive and negative reactions, and whether customers who view an article subsequently open a conversation. Effective articles deflect tickets and empower self-service.

Company Support Trends

Customer Support · Metric Definition

Company Support Trends analyses conversation volume, topic distribution, and satisfaction scores at the company or account level over time. It identifies accounts with escalating support needs that may signal churn risk, as well as accounts whose declining support needs may indicate successful adoption.

Conversation Abandonment Rate

Customer Support · Metric Definition

Abandonment Rate = Abandoned Conversations / Total Conversations × 100

Conversation Abandonment Rate measures the percentage of support conversations where the customer stops responding before the issue is resolved. It indicates friction, frustration, or perceived futility in the support experience. High abandonment often correlates with long wait times or unhelpful initial responses.

Conversation Channel Analysis

Customer Support · Metric Definition

Conversation Channel Analysis breaks down support metrics by communication channel - in-app chat, email, social media, and phone - to compare volume, performance, and customer satisfaction across channels. It informs channel investment decisions and identifies where customers prefer to seek help.

Conversation Funnel Analysis

Customer Support · Metric Definition

Conversation Funnel Analysis maps the stages a support conversation passes through - from initiation through triage, assignment, response, and resolution - and measures conversion rates between each stage. It identifies where conversations stall, get lost, or are abandoned.

Conversation Resolution Rate

Customer Support · Metric Definition

Resolution Rate = Conversations Resolved (Not Re-opened) / Total Conversations × 100

Conversation Resolution Rate measures the percentage of support conversations that are closed and remain closed - without being re-opened by the customer within a defined window. It distinguishes genuine resolutions from premature closures that leave the customer's issue unaddressed.
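A small sketch of the formula above, counting a conversation as resolved only if it was closed and not re-opened within a window. The 7-day window, the function name, and the `(closed_at, reopened_at)` record shape are assumptions for illustration.

```python
from datetime import datetime, timedelta


def resolution_rate(conversations, window=timedelta(days=7)):
    """Percentage of conversations closed and not re-opened within `window`.

    Each conversation is a (closed_at, reopened_at) pair; either may be None.
    """
    total = len(conversations)
    if total == 0:
        return 0.0
    resolved = 0
    for closed_at, reopened_at in conversations:
        if closed_at is None:
            continue  # still open, so not resolved
        if reopened_at is None or reopened_at - closed_at > window:
            resolved += 1
    return resolved / total * 100


sample = [
    (datetime(2024, 3, 1), None),                   # closed, stayed closed
    (datetime(2024, 3, 1), datetime(2024, 3, 3)),   # re-opened after 2 days: premature closure
    (datetime(2024, 3, 1), datetime(2024, 3, 11)),  # re-opened after 10 days: outside the window
    (None, None),                                   # never closed
]
print(resolution_rate(sample))  # 50.0
```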

Conversation Volume

Customer Support · Metric Definition

Conversation Volume = Count of New Conversations in Period

Conversation Volume measures the total number of new support conversations initiated within a given period. It is the foundational capacity metric for support operations, driving staffing decisions, budget planning, and automation investment. Sudden volume changes often correlate with product releases, incidents, or seasonal patterns.

Customer Effort Score

Customer Support · Metric Definition

CES = Sum of Effort Ratings / Total Responses

Customer Effort Score (CES) measures how much effort a customer must expend to get their issue resolved through Intercom. It is typically captured via a post-conversation survey asking customers to rate the ease of their experience. Lower effort strongly correlates with higher retention and loyalty.

Customer Journey Support Analysis

Customer Support · Metric Definition

Customer Journey Support Analysis maps support conversation volume and topics to specific stages of the customer lifecycle - onboarding, activation, adoption, renewal, and expansion. It reveals which journey stages generate the most support friction and where product improvements could reduce support demand.

Customer Satisfaction Score

Customer Support · Metric Definition

CSAT = Positive Ratings / Total Ratings × 100

Customer Satisfaction Score (CSAT) measures the percentage of customers who rate their support experience positively after an Intercom conversation. It is the most widely used indicator of support quality and directly reflects whether agents are meeting customer expectations.
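The CSAT formula can be worked through in a few lines. This sketch assumes ratings on a 1 to 5 scale with 4 and 5 counted as positive. That threshold is an assumption here, so adjust it to match how your ratings are collected.

```python
def csat(ratings, positive_threshold=4):
    """CSAT as a percentage: positive ratings divided by all ratings, times 100."""
    if not ratings:
        return None  # no ratings in the period, so CSAT is undefined
    positive = sum(1 for rating in ratings if rating >= positive_threshold)
    return positive / len(ratings) * 100


# Hypothetical post-conversation ratings: five of the eight are 4 or 5.
ratings = [5, 4, 3, 5, 2, 4, 5, 1]
print(csat(ratings))  # 62.5
```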

Customer Segment Support Analysis

Customer Support · Metric Definition

Customer Segment Support Analysis evaluates support metrics - volume, resolution time, CSAT, and topic distribution - across customer segments defined by plan tier, company size, industry, or cohort. It reveals whether high-value segments receive appropriate service levels and where segment-specific issues exist.

First Response Time

Customer Support · Metric Definition

First Response Time = First Agent Reply Timestamp − Conversation Created Timestamp

First Response Time (FRT) measures the elapsed time from when a customer initiates a conversation to when they receive the first human reply from a support agent. It is one of the most impactful support metrics because speed of initial acknowledgement strongly influences customer perception of the entire interaction.
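The timestamp subtraction in the formula looks like this in practice. The sample conversations are invented. Summarising with the median rather than the mean is a design choice of this sketch, not something the definition requires. It keeps one slow reply from dominating the figure.

```python
from datetime import datetime
from statistics import mean, median

# Hypothetical (created_at, first_agent_reply_at) pairs for three conversations.
conversations = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 2)),     # 2 minutes
    (datetime(2024, 3, 1, 10, 0), datetime(2024, 3, 1, 10, 5)),   # 5 minutes
    (datetime(2024, 3, 1, 11, 0), datetime(2024, 3, 1, 11, 15)),  # 15 minutes
]

# First Response Time per conversation, in seconds.
frt_seconds = [(reply - created).total_seconds() for created, reply in conversations]

median_frt = median(frt_seconds)
mean_frt = mean(frt_seconds)
print(median_frt, mean_frt)  # 300.0 440.0
```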

Help Centre Article Views

Customer Support · Metric Definition

Article Views = Count of Page Views per Article in Period

Help Centre Article Views measures the number of times each article in your Intercom help centre is viewed by customers. It reveals which topics customers most frequently seek help with and which articles are discoverable. Pairing view data with effectiveness scores identifies high-traffic articles that need improvement.

Message Sentiment Analysis

Customer Support · Metric Definition

Message Sentiment Analysis applies natural language processing to Intercom conversation messages to classify customer sentiment as positive, neutral, or negative. It tracks sentiment trends over time and across segments, providing an objective measure of customer emotion that complements survey-based metrics like CSAT.

Peak Support Hours Analysis

Customer Support · Metric Definition

Peak Support Hours Analysis identifies the times of day, days of the week, and seasonal periods when support conversation volume is highest. It provides the demand signal needed for optimal agent scheduling and helps set customer expectations about response times during different periods.

Proactive Support Effectiveness

Customer Support · Metric Definition

Proactive Support Effectiveness measures the impact of outbound messages, product tours, tooltips, and banners on reducing inbound support volume. It compares support conversation rates between customers who received proactive communications and those who did not, quantifying the deflection value of proactive support.

Repeat Contact Rate

Customer Support · Metric Definition

Repeat Contact Rate = Customers with Repeat Contacts / Total Customers Contacting Support × 100

Repeat Contact Rate measures the percentage of customers who contact support about the same or a closely related issue within a defined window after their initial conversation was closed. It reveals incomplete resolutions, workarounds masquerading as fixes, and systemic product issues that generate recurring support demand.
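One way to operationalise "the same or a closely related issue" is to match on customer and topic. That matching rule, the 7-day window, and the record shape below are all assumptions made for this sketch.

```python
from datetime import datetime, timedelta


def repeat_contact_rate(conversations, window=timedelta(days=7)):
    """Percentage of customers who re-contact on the same topic within `window` of a prior close.

    Each conversation is (customer, topic, opened_at, closed_at).
    """
    customers, repeaters = set(), set()
    last_closed = {}  # (customer, topic) -> closed_at of the previous conversation
    for customer, topic, opened_at, closed_at in sorted(conversations, key=lambda c: c[2]):
        customers.add(customer)
        previous_close = last_closed.get((customer, topic))
        if previous_close is not None and opened_at - previous_close <= window:
            repeaters.add(customer)
        last_closed[(customer, topic)] = closed_at
    if not customers:
        return 0.0
    return len(repeaters) / len(customers) * 100


d = lambda day: datetime(2024, 1, day)
sample = [
    ("acme", "billing", d(1), d(2)),
    ("acme", "billing", d(4), d(5)),      # same topic, 2 days after close: repeat
    ("globex", "login", d(1), d(1)),
    ("globex", "billing", d(3), d(3)),    # different topic: not a repeat
    ("initech", "export", d(1), d(2)),
    ("initech", "export", d(20), d(21)),  # same topic, outside the window
    ("umbrella", "login", d(5), d(5)),
]
print(repeat_contact_rate(sample))  # 25.0
```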

Average Resolution Time

Customer Support · Metric Definition

Average Resolution Time = Sum of (Conversation Resolved Timestamp − Conversation Created Timestamp) / Resolved Conversations

Average Resolution Time measures the mean elapsed time from when a customer opens a conversation in Intercom to when it is marked as resolved. It encompasses first response time, back-and-forth exchanges, internal investigation, and any waiting periods. It is a primary indicator of support efficiency and customer experience.

Self-Service Success Rate

Customer Support · Metric Definition

Self-Service Success Rate = Self-Service Resolutions / Total Support Interactions × 100

Self-Service Success Rate measures the percentage of customers who find a resolution through help centre articles, chatbot flows, or product tours without needing to speak to a human agent. It is the ultimate measure of whether self-service investment is paying off and is a key driver of support scalability.

Support Cost per Conversation

Customer Support · Metric Definition

Cost per Conversation = Total Support Costs / Total Conversations Handled

Support Cost per Conversation calculates the fully loaded cost of handling a single support conversation, including agent compensation, tooling costs, management overhead, and infrastructure. It provides the economic foundation for automation ROI calculations and staffing decisions.

Support Ticket Escalation Rate

Customer Support · Metric Definition

Escalation Rate = Escalated Conversations / Total Conversations × 100

Support Ticket Escalation Rate measures the percentage of conversations that require escalation from first-line agents to senior specialists, managers, or other departments. High escalation rates indicate gaps in first-tier training, documentation, or tooling that prevent frontline resolution.

Tag Usage Analysis

Customer Support · Metric Definition

Tag Usage Analysis examines how conversation tags are applied across Intercom, measuring tag frequency, consistency, and coverage. It ensures that the tagging taxonomy remains relevant and that agents apply tags consistently, which is essential for reliable topic-level reporting and routing.

Team Workload Distribution

Customer Support · Metric Definition

Team Workload Distribution measures how conversations are distributed across teams and individual agents within Intercom. It highlights imbalances where some agents are overloaded while others are under-utilised, enabling fairer distribution and more sustainable working conditions.

Common questions

- Through Intercom's official MCP server at `mcp.intercom.com`, which exposes conversations, contacts, and tickets over OAuth. **Currently supported for US-hosted Intercom workspaces only**, so EU and AU data-residency customers should use the warehouse path and replicate Intercom to Snowflake, BigQuery, or another warehouse via Fivetran, Airbyte, or a custom job. Teams without a warehouse engage our professional services team, which stands up the pipeline and ships a dbt semantic layer for first response time, resolution time, CSAT, and bot deflection.

- Any metric derivable from your Intercom warehouse tables: first response time, resolution time, CSAT score, conversation volume, bot deflection rate, help centre article views, re-contact rate, tag distribution, and more. Metrics support dimensions like team, agent, topic, tag, and channel.

- Yes. Fin deflection rate, resolution rate, handoff rate, and customer satisfaction after bot interactions can all be defined as metrics. KPI Tree adds what Intercom's Fin reporting lacks: causal relationships showing whether bot improvements actually drive CSAT and reduce agent load.

- The MCP connection takes minutes - no warehouse required. If your Intercom data is already in a warehouse, connecting KPI Tree takes under an hour. If you need AI foundations built from scratch, our professional services team handles the setup end to end. Most teams have a working support metric tree within a single session.

- No. Intercom's reporting is excellent for day-to-day support operations - queue management, agent performance, conversation routing. KPI Tree solves a different problem: connecting support metrics to business outcomes with causal structure and cross-functional ownership.

- Yes - this is exactly what KPI Tree is designed for. Support metrics from Intercom sit in the same tree as retention, expansion, and revenue metrics from Stripe, Chargebee, or your CRM. The causal tree makes the connection explicit and statistical.

- No. KPI Tree reads aggregate metrics from your data warehouse - not conversation transcripts. It sees metadata like timestamps, tags, resolution status, and satisfaction scores, not the content of customer conversations.

- Yes. Metrics support dimensions like team, agent, topic, tag, channel, and priority. Dimension breakdowns auto-generate child metrics - "Resolution Time (Team: EMEA)", "CSAT (Topic: Billing)" - each with its own owner and alerts.
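The dimension breakdown described in the last answer can be pictured as a group-by that names each child metric after its dimension value. The sketch below follows the naming pattern quoted above. The `breakdown` function and the sample rows are hypothetical and do not represent KPI Tree's implementation.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical rows: (team, resolution time in hours) for individual conversations.
rows = [("EMEA", 6.0), ("EMEA", 8.0), ("APAC", 3.0)]


def breakdown(metric, dimension, rows):
    """Group measurements by dimension value and name each child metric accordingly."""
    groups = defaultdict(list)
    for value, measurement in rows:
        groups[value].append(measurement)
    return {
        f"{metric} ({dimension}: {value})": mean(measurements)
        for value, measurements in groups.items()
    }


child_metrics = breakdown("Resolution Time", "Team", rows)
print(child_metrics)
# {'Resolution Time (Team: EMEA)': 7.0, 'Resolution Time (Team: APAC)': 3.0}
```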

Your Intercom data proves support drives growth. Make the case undeniable.

Connect your warehouse to KPI Tree and turn Intercom conversations into a support performance system with causal metric trees, RACI ownership, and statistical proof that support quality drives retention.