GitHub Integration

Turn GitHub activity into an engineering performance system your whole organisation can read.

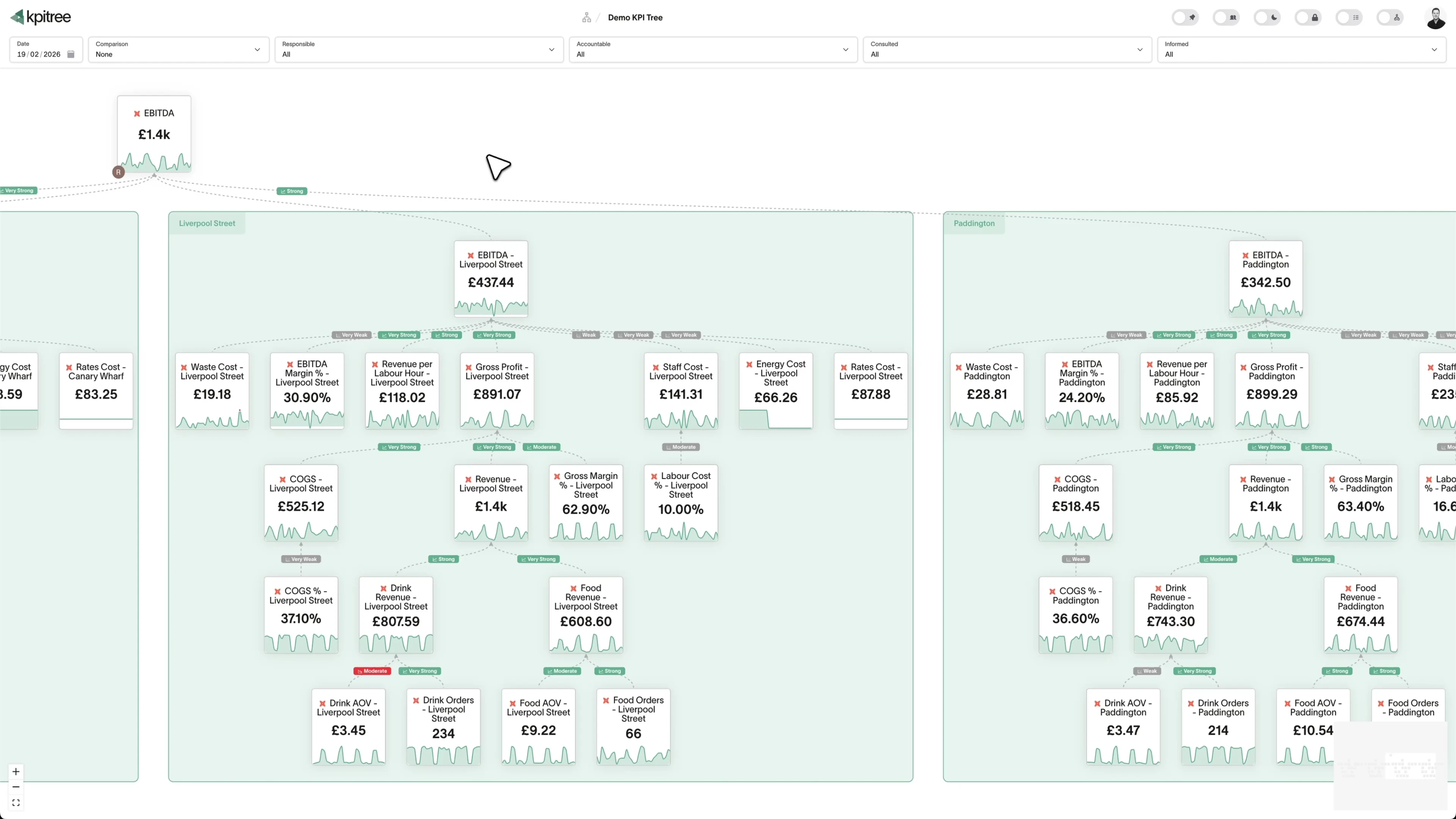

GitHub captures everything your engineering team does: pull requests, code reviews, CI/CD runs, deployments, and incident responses. But that data sits in API logs and third-party dashboards, disconnected from the business outcomes it drives. KPI Tree consumes GitHub data in one of three ways: through GitHub's official MCP server (github/github-mcp-server, the reference implementation GitHub maintains and one of the most mature vendor-shipped MCP servers in production), through your warehouse where GitHub data already lands via Fivetran or Airbyte, or through a professional services engagement that builds the pipeline for you. Once connected, KPI Tree maps that data to metrics like cycle time, deployment frequency, and review throughput, then structures those metrics into causal trees that show how engineering work drives product and revenue outcomes. DORA metrics stop being a reporting exercise and start being an accountability system.

From GitHub events to engineering performance trees

KPI Tree consumes GitHub data through the official GitHub MCP server, your warehouse where GitHub data already lands, or a professional services engagement that builds the pipeline for you.

Connect your GitHub data

Three ways to get started, depending on your stack.

Pull metrics from GitHub directly through the Model Context Protocol.

Connect your existing warehouse where GitHub data already lands.

Our professional services team can build turnkey AI foundations for you in a matter of weeks: a data warehouse on Snowflake or BigQuery, ELT with Fivetran, and everything modelled in dbt with a semantic layer.

Map GitHub metrics from your warehouse tables

Define metrics from your GitHub data using SQL or the metric builder: PR cycle time, deployment frequency, review turnaround, merge rates, CI pass rates, and more. Each metric gets a time grain, dimensions (repo, team, author), and a refresh schedule.
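Whether a metric is written in SQL or assembled in the metric builder, the computation behind a time grain plus a dimension looks roughly like the Python sketch below: median PR cycle time (open to merge) per ISO week and per team. The row shape (`opened_at`, `merged_at`, `team`, `repo`) is a hypothetical stand-in for a warehouse table, and this is an illustration, not KPI Tree's implementation.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

# Hypothetical PR rows, shaped like records from a warehouse pull-request table.
prs = [
    {"team": "platform", "repo": "api", "opened_at": "2024-05-06T09:00:00", "merged_at": "2024-05-06T15:00:00"},
    {"team": "platform", "repo": "api", "opened_at": "2024-05-07T10:00:00", "merged_at": "2024-05-08T10:00:00"},
    {"team": "product", "repo": "web", "opened_at": "2024-05-06T08:00:00", "merged_at": "2024-05-06T12:00:00"},
    {"team": "product", "repo": "web", "opened_at": "2024-05-09T08:00:00", "merged_at": None},  # still open
]


def pr_cycle_time(rows, dimension="team"):
    """Median open-to-merge hours, keyed by (ISO week of merge, dimension value)."""
    buckets = defaultdict(list)
    for row in rows:
        if row["merged_at"] is None:
            continue  # open PRs have no cycle time yet
        opened = datetime.fromisoformat(row["opened_at"])
        merged = datetime.fromisoformat(row["merged_at"])
        year, week, _ = merged.isocalendar()  # weekly time grain
        hours = (merged - opened).total_seconds() / 3600
        buckets[(f"{year}-W{week:02d}", row[dimension])].append(hours)
    return {key: median(values) for key, values in sorted(buckets.items())}
```

Calling `pr_cycle_time(prs)` groups by team; `pr_cycle_time(prs, dimension="repo")` reuses the same logic for a different breakdown, which is the point of declaring dimensions once per metric.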

Build trees and assign ownership

Arrange GitHub metrics into causal trees - deployment frequency drives release velocity, which drives feature throughput. Assign RACI owners to every metric. When cycle time spikes, the right person is alerted with statistical context and a clear path to root cause.
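A tree of owned metrics can be modelled as a plain parent/child structure. The Python sketch below builds the example chain from the paragraph above and walks from a node up to the root, listing each metric a change could affect and its owner. `MetricNode`, `escalation_path`, and the owner titles are hypothetical, and only the "Responsible" role of RACI is modelled.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MetricNode:
    """A node in a hypothetical metric tree; only the RACI 'Responsible' role is kept."""
    name: str
    responsible: str
    # Links are excluded from repr/eq so parent <-> child cycles never recurse forever.
    parent: Optional["MetricNode"] = field(default=None, repr=False, compare=False)
    children: List["MetricNode"] = field(default_factory=list, repr=False, compare=False)

    def add_child(self, child: "MetricNode") -> "MetricNode":
        child.parent = self
        self.children.append(child)
        return child


def escalation_path(node: Optional[MetricNode]) -> list:
    """(metric, owner) pairs walking from a node up to the root of its tree."""
    path = []
    while node is not None:
        path.append((node.name, node.responsible))
        node = node.parent
    return path


# Deployment frequency -> release velocity -> feature throughput, as in the text.
throughput = MetricNode("Feature throughput", "VP Engineering")
velocity = throughput.add_child(MetricNode("Release velocity", "Release manager"))
deploys = velocity.add_child(MetricNode("Deployment frequency", "Platform lead"))
```

`escalation_path(deploys)` returns the three metrics from leaf to root with their owners, which is the information needed to decide who should hear about a movement at any level.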

Engineering metrics that connect to business outcomes

KPI Tree takes the GitHub data already in your warehouse and adds the structure, ownership, and causal analysis that turns engineering activity into organisational accountability.

DORA metrics as a causal tree, not a dashboard

Deployment frequency, lead time for changes, change failure rate, and time to restore service - arranged in a tree that shows how they drive each other and connect to product and revenue metrics above them. When one degrades, trace the cause through the tree instead of guessing.
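Two of the four DORA metrics are not in the metric list further down, so here is a minimal Python sketch of change failure rate and time to restore service over a deployment log. The record fields are hypothetical, and timing restoration from the failed deployment (rather than from incident detection) is a simplifying assumption.

```python
from datetime import datetime
from statistics import median

# Hypothetical deployment log: `failed` marks a deployment that caused a production
# failure; `restored_at` is when service was restored (None if there was no incident).
deploys = [
    {"at": datetime(2024, 5, 6, 10), "failed": False, "restored_at": None},
    {"at": datetime(2024, 5, 6, 15), "failed": True, "restored_at": datetime(2024, 5, 6, 16, 30)},
    {"at": datetime(2024, 5, 7, 11), "failed": False, "restored_at": None},
    {"at": datetime(2024, 5, 8, 9), "failed": True, "restored_at": datetime(2024, 5, 8, 9, 30)},
]


def change_failure_rate(rows):
    """Failed deployments / total deployments x 100 (None for an empty log)."""
    if not rows:
        return None
    return sum(r["failed"] for r in rows) / len(rows) * 100


def time_to_restore_hours(rows):
    """Median hours from a failed deployment to restored service, restored incidents only."""
    durations = [
        (r["restored_at"] - r["at"]).total_seconds() / 3600
        for r in rows
        if r["failed"] and r["restored_at"] is not None
    ]
    return median(durations) if durations else None
```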

Statistical correlations between engineering and product metrics

KPI Tree runs Pearson correlations and Granger causality tests between your GitHub metrics and product KPIs. Discover whether faster PR reviews actually correlate with lower defect rates, or whether deployment frequency drives feature adoption - with statistical confidence, not intuition.
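For readers who want to see what the correlation step computes, the sketch below implements the textbook sample Pearson coefficient in plain Python, plus a lagged variant that correlates one series with a later slice of the other. The lagged correlation is only a crude stand-in for precedence: a real Granger test regresses one series on lagged values of both and compares the fits with an F-test (statsmodels offers `grangercausalitytests` for this). The weekly series are invented for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Sample Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


def lagged_pearson(xs, ys, lag):
    """Correlate xs[t] with ys[t + lag]: does x tend to move before y does?"""
    return pearson(xs[: len(xs) - lag], ys[lag:])


# Hypothetical weekly series: median review turnaround (hours) and escaped defects.
review_hours = [30, 26, 22, 18, 15, 12, 10, 9]
defects = [14, 13, 13, 11, 9, 8, 7, 6]
```

A strongly positive `pearson(review_hours, defects)` here means weeks with shorter reviews also had fewer defects; it says nothing about direction, which is why a lag-based test is the natural next step.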

Ownership at the metric level, not the repo level

RACI ownership on every metric means the right engineering lead owns cycle time for their team, the platform lead owns CI reliability, and the VP Engineering owns aggregate velocity. Push notifications via Slack, email, or SMS alert owners when their metrics move outside expected bounds.

DORA metrics that actually drive improvement.

Most teams track DORA metrics in a dashboard that gets reviewed monthly and forgotten weekly. KPI Tree structures them as a causal tree: deployment frequency feeds into lead time for changes, which connects to change failure rate and time to restore. Each metric has an owner, a target, and automated alerts when it moves outside statistical norms. The result is not a prettier dashboard - it is a system where degradation in any DORA metric triggers an owned response before it compounds.

- Deployment frequency, lead time, change failure rate, and MTTR as tree nodes

- Causal relationships show how DORA metrics drive each other

- Statistical outlier detection alerts owners before trends become crises

- Dimension breakdowns by team, repo, or service for targeted improvement
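The exact outlier method behind these alerts is not described here. One common, simple approach is a z-score against a trailing window, sketched below in Python; the trailing-window design, the threshold of 3 standard deviations, and the sample data are all assumptions.

```python
from statistics import mean, stdev


def is_outlier(history, latest, threshold=3.0):
    """Flag `latest` if it lies more than `threshold` standard deviations
    from the mean of the trailing `history` window."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a perfectly flat series stands out
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily lead time for changes (hours) over the trailing two weeks.
lead_time_hours = [20, 22, 19, 21, 23, 20, 18, 22, 21, 19, 20, 22, 21, 20]
```

A z-score is easy to explain to an owner ("this is about 14 standard deviations above normal"), but it assumes roughly stable, symmetric data; seasonal metrics such as weekday deployment counts usually need a seasonal baseline instead.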

Code review health visible without chasing Slack threads.

Review turnaround time, review depth, approval rates, and comment density - all tracked as metrics with ownership. When review bottlenecks emerge, the correlation engine shows whether they are causing downstream cycle time increases. Team leads see their review metrics alongside deployment metrics in a single tree, making trade-offs visible instead of buried in GitHub notification noise.

- Review turnaround time, approval rate, and comment density as owned metrics

- Correlation analysis links review health to cycle time and defect rates

- Dimension breakdowns by reviewer, team, and repository

- Automated alerts when review queues exceed statistical thresholds

CI/CD pipeline performance tied to delivery outcomes.

GitHub Actions workflow duration, pass rates, flaky test frequency, and build queue time - tracked as metrics that feed into your delivery tree. When CI reliability drops, the tree shows the downstream impact on deployment frequency and release cadence. Platform teams own CI metrics, product teams own delivery metrics, and the causal tree makes the dependency explicit.

- Workflow duration, pass rate, and flaky test frequency as owned metrics

- Causal trees link CI health to deployment frequency and release velocity

- Platform and product teams see shared accountability in a single view

- Historical trend analysis shows whether CI investments are paying off
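Flaky test frequency needs a working definition before it can be tracked. The Python sketch below assumes one common definition: a test is flaky if it both passed and failed on the same commit (for example across re-runs). The `(commit_sha, test_name, passed)` record shape is hypothetical.

```python
from collections import defaultdict

# Hypothetical test results gathered from CI runs: (commit_sha, test_name, passed).
results = [
    ("a1b2c3", "test_login", True),
    ("a1b2c3", "test_login", False),     # same commit, different outcome -> flaky
    ("a1b2c3", "test_checkout", True),
    ("d4e5f6", "test_checkout", False),
    ("d4e5f6", "test_checkout", False),  # fails consistently -> a real failure, not flaky
    ("d4e5f6", "test_search", True),
]


def flaky_tests(rows):
    """Tests that produced both a pass and a fail for the same commit."""
    outcomes = defaultdict(set)
    for sha, test, passed in rows:
        outcomes[(sha, test)].add(passed)
    return sorted({test for (_, test), seen in outcomes.items() if seen == {True, False}})


def flaky_test_frequency(rows):
    """Percentage of distinct tests observed that showed flaky behaviour."""
    all_tests = {test for _, test, _ in rows}
    if not all_tests:
        return 0.0
    return len(flaky_tests(rows)) / len(all_tests) * 100
```

Keying on the commit separates flakiness from genuine regressions: `test_checkout` fails twice on `d4e5f6` but never passes there, so it is treated as a real failure.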

Engineering investment aligned to business value.

GitHub activity metrics - commits, PRs, deployments - connect upward to product metrics like feature adoption, time to value, and customer satisfaction. Executive stakeholders see how engineering throughput drives business outcomes without needing to understand Git. Engineering leaders use the same tree to justify headcount, prioritise platform work, and demonstrate that velocity improvements translate to revenue impact.

- Engineering metrics connect upward to product and revenue KPIs in a single tree

- RACI ownership spans engineering, product, and executive stakeholders

- Quarterly business reviews use the same tree leadership sees daily

- MCP server and REST API expose engineering metrics to AI assistants and internal tools

How KPI Tree uses GitHub data differently

Engineering analytics tools visualise GitHub activity. KPI Tree connects that activity to business outcomes through causal structure, statistical analysis, and human ownership.

Causal trees, not activity dashboards

Other tools show GitHub metrics in charts and tables. KPI Tree arranges them in causal trees that model how engineering work drives business outcomes - making trade-offs visible and accountability explicit.

Statistical evidence that engineering work matters

Pearson correlations and Granger causality tests between engineering and business metrics replace gut-feel arguments with statistical evidence. Test whether faster reviews are followed by fewer defects, or whether CI investments precede gains in deployment frequency.

Ownership that spans the org chart

Engineering leads own their team metrics, platform teams own CI reliability, and executives own aggregate velocity - all in a single tree. When something moves, the right person is already accountable.

Metrics you can track

26 GitHub metrics ready to add to your metric trees.

Branch Lifecycle Analysis

Branch Lifecycle Analysis measures the duration from branch creation to merge or deletion across a repository. It surfaces stale or abandoned branches that inflate cognitive overhead and merge-conflict risk. Tracking this metric helps teams enforce hygiene policies and maintain a clean codebase.

Bug Fix Rate

Bug Fix Rate = Bugs Closed in Period / Total Open Bugs at Start of Period × 100

Bug Fix Rate measures the proportion of bug-labelled issues closed within a given period relative to the total number of open bugs. It reflects a team's capacity and prioritisation of quality work. A consistently low rate may signal under-investment in reliability.
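A minimal Python sketch of the Bug Fix Rate formula, assuming issues carry a label set plus created and closed dates (hypothetical field names). Note that, following the formula literally, the numerator also counts bugs opened and closed inside the period (the 10 May bug below), so the rate can reach or exceed 100% even while older bugs remain open.

```python
from datetime import date

# Hypothetical issue records; in practice these would come from GitHub issues data.
issues = [
    {"labels": {"bug"}, "created": date(2024, 4, 20), "closed": date(2024, 5, 3)},
    {"labels": {"bug"}, "created": date(2024, 4, 25), "closed": None},
    {"labels": {"bug", "ui"}, "created": date(2024, 4, 28), "closed": date(2024, 5, 20)},
    {"labels": {"feature"}, "created": date(2024, 4, 1), "closed": date(2024, 5, 2)},
    {"labels": {"bug"}, "created": date(2024, 5, 10), "closed": date(2024, 5, 12)},
]


def bug_fix_rate(rows, start, end):
    """Bugs closed in [start, end) / bugs open at `start` x 100, or None if none were open."""
    bugs = [r for r in rows if "bug" in r["labels"]]
    open_at_start = [
        b for b in bugs
        if b["created"] < start and (b["closed"] is None or b["closed"] >= start)
    ]
    closed_in_period = [
        b for b in bugs if b["closed"] is not None and start <= b["closed"] < end
    ]
    if not open_at_start:
        return None  # the rate is undefined with no open bugs at the start
    return len(closed_in_period) / len(open_at_start) * 100
```

For May 2024 this returns 100.0, although one of the three bugs open on 1 May is still open; restricting the numerator to bugs that were open at the start is a stricter alternative.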

Code Churn Rate

Code Churn Rate = Lines Re-changed Within N Days / Total Lines Changed × 100

Code Churn Rate quantifies the percentage of changed lines that are modified again within a short window (N days) after their initial commit. High churn often indicates unclear requirements, premature coding, or inadequate design reviews. It is a proxy for wasted engineering effort.
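A simplified Python sketch of the churn formula. It assumes a hypothetical change log in which every line already has a stable identifier; real tooling has to derive those identities from diff or blame history, which is the hard part and is not shown. Each change counts toward "Total Lines Changed", and a change counts as re-churn when the same line was last changed no more than N days earlier.

```python
from datetime import date, timedelta

# Hypothetical line-level change log: (file, line_key, change_date).
changes = [
    ("app.py", "L10", date(2024, 5, 1)),
    ("app.py", "L10", date(2024, 5, 4)),   # re-changed 3 days later -> churn
    ("app.py", "L11", date(2024, 5, 1)),
    ("db.py", "L3", date(2024, 5, 2)),
    ("db.py", "L3", date(2024, 6, 20)),    # re-changed 49 days later -> churn only if N >= 49
]


def code_churn_rate(log, window_days=21):
    """Percentage of line changes that rewrite a line changed within the previous `window_days`."""
    last_change = {}
    rechanged = 0
    for file, line, when in sorted(log, key=lambda c: c[2]):
        key = (file, line)
        previous = last_change.get(key)
        if previous is not None and when - previous <= timedelta(days=window_days):
            rechanged += 1
        last_change[key] = when
    return rechanged / len(log) * 100 if log else 0.0
```

The window N is a policy choice: widening it from 21 to 60 days doubles the churn rate in this sample, so it should be fixed before trends are compared.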

Code Coverage Trend

Code Coverage = Lines Covered by Tests / Total Lines of Code × 100

Code Coverage Trend tracks the percentage of code exercised by automated tests over time, measured per commit or release. It highlights whether new code is being adequately tested and whether coverage is improving or regressing. Sustained downward trends signal growing risk.

Code Quality Trend Analysis

Code Quality Trend Analysis aggregates signals such as linting violations, cyclomatic complexity, code duplication, and static-analysis findings over time. It provides a longitudinal view of code health across repositories. Consistent improvement indicates maturing engineering practices.

Code Review Quality Score

Code Review Quality Score evaluates the substantiveness of pull request reviews by weighting factors such as comment depth, suggestions made, files reviewed versus files changed, and time spent. It distinguishes meaningful reviews from rubber-stamp approvals. Higher scores correlate with fewer post-merge defects.

Code Review Velocity

Code Review Velocity = Median(First Review Timestamp − PR Ready Timestamp)

Code Review Velocity measures the elapsed time from when a pull request is opened or marked ready for review to when the first substantive review is submitted. It is a key driver of lead time for changes. Long review waits are one of the most common causes of developer context-switching.
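A minimal Python sketch of the Code Review Velocity formula. The `ready_at` and `first_review_at` fields are hypothetical; `ready_at` stands for the moment a PR was opened as ready or converted from draft.

```python
from datetime import datetime
from statistics import median

# Hypothetical PR timelines; `first_review_at` is None while a PR is still waiting.
prs = [
    {"ready_at": "2024-05-06T09:00", "first_review_at": "2024-05-06T11:00"},
    {"ready_at": "2024-05-06T14:00", "first_review_at": "2024-05-07T10:00"},
    {"ready_at": "2024-05-07T08:00", "first_review_at": "2024-05-07T08:30"},
    {"ready_at": "2024-05-08T16:00", "first_review_at": None},
]


def review_velocity_hours(rows):
    """Median hours from ready-for-review to first review, over reviewed PRs only."""
    waits = [
        (datetime.fromisoformat(r["first_review_at"])
         - datetime.fromisoformat(r["ready_at"])).total_seconds() / 3600
        for r in rows
        if r["first_review_at"] is not None
    ]
    return median(waits) if waits else None
```

The median keeps one PR left overnight from dominating the figure. Skipping PRs that are still waiting biases the result downward during a review backlog, so pairing this metric with a count of PRs awaiting first review is a sensible companion.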

Commit Frequency

Commit Frequency = Total Commits / Time Period

Commit Frequency measures the number of commits pushed to a repository or across an organisation within a given time period. It serves as a high-level activity indicator and a proxy for continuous integration discipline. Consistently low frequency may indicate large, risky batch commits.

Deployment Frequency

Deployment Frequency = Number of Deployments / Time Period

Deployment Frequency measures how often an organisation successfully releases to production. It is one of the four DORA metrics and a key indicator of delivery maturity. Elite teams deploy on demand, multiple times per day, while low performers deploy monthly or less frequently.

Developer Contribution Patterns

Developer Contribution Patterns analyses how commits, reviews, and issue activity are distributed across team members over time. It highlights knowledge concentration, identifies potential bus-factor risks, and reveals whether workload distribution is healthy. Balanced contributions indicate resilient teams.

Developer Productivity Score

Developer Productivity Score is a composite metric that blends output indicators (commits, PRs merged), quality signals (review depth, test coverage), and collaboration measures (reviews given, discussions participated in). It provides a balanced view of developer effectiveness that avoids over-indexing on raw output.

DevOps Pipeline Efficiency

Pipeline Efficiency = Successful Pipeline Runs / Total Pipeline Runs × 100

DevOps Pipeline Efficiency measures the speed, reliability, and resource utilisation of CI/CD pipelines. The formula above captures only the reliability component (pipeline success rate); a full view also tracks build duration, test execution time, and queue wait time. Efficient pipelines accelerate feedback loops and reduce developer idle time.
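A minimal Python sketch pairing the success-rate formula with one speed signal. The `conclusion` values mirror those GitHub Actions reports for completed workflow runs (such as `success`, `failure`, `cancelled`), while `duration_s` is an assumed precomputed field rather than a raw API attribute.

```python
from statistics import median

# Hypothetical completed workflow runs.
runs = [
    {"conclusion": "success", "duration_s": 420},
    {"conclusion": "failure", "duration_s": 380},
    {"conclusion": "success", "duration_s": 510},
    {"conclusion": "cancelled", "duration_s": 60},
    {"conclusion": "success", "duration_s": 450},
]


def pipeline_success_rate(rows, exclude=()):
    """Successful runs / total runs x 100; `exclude` drops runs with the given conclusions."""
    counted = [r for r in rows if r["conclusion"] not in exclude]
    if not counted:
        return None
    return sum(r["conclusion"] == "success" for r in counted) / len(counted) * 100


def median_duration_minutes(rows):
    """Median wall-clock duration of successful runs, in minutes."""
    durations = [r["duration_s"] for r in rows if r["conclusion"] == "success"]
    return median(durations) / 60 if durations else None
```

By default every run counts, as in the formula (60% here). Passing `exclude=("cancelled",)` treats superseded runs as noise and gives 75%; whichever convention a team picks should stay fixed over time.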

Discussion Engagement Rate

Discussion Engagement Rate = Discussions with Responses / Total Discussions × 100

Discussion Engagement Rate measures the proportion of GitHub Discussions that receive replies, upvotes, or marked answers within a defined period. It reflects community health and the effectiveness of asynchronous knowledge-sharing. Low engagement may indicate poor discoverability or cultural barriers to participation.

Feature Development Cycle Time

Cycle Time = Deployment Timestamp − First Feature Commit Timestamp

Feature Development Cycle Time measures the elapsed time from the first commit on a feature branch to successful deployment to production. It encompasses coding, review, testing, and release phases. Shorter cycle times enable faster user feedback and more responsive product development.

Issue Resolution Time

Issue Resolution Time = Issue Closed Timestamp − Issue Created Timestamp

Issue Resolution Time measures the elapsed time from when a GitHub issue is opened to when it is closed. It reflects team responsiveness, prioritisation effectiveness, and overall execution speed. Segmenting by label (bug, feature, chore) provides more actionable insights.

Lead Time for Changes

Lead Time = Production Deployment Timestamp − Commit Timestamp

Lead Time for Changes measures the elapsed time from when a code change is committed to when it is successfully running in production. It is one of the four DORA metrics and a key indicator of delivery pipeline efficiency. Elite performers achieve lead times measured in hours rather than days or weeks.
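The lead time formula needs to know which production deployment first shipped each commit. The Python sketch below assumes each deployment record lists the commit SHAs it shipped; that record shape is hypothetical, and deriving it in practice usually means comparing the deployed SHA with the previous one.

```python
from datetime import datetime
from statistics import median

# Hypothetical data: commit timestamps and production deployments with the commits they shipped.
commits = {
    "c1": datetime(2024, 5, 6, 9, 0),
    "c2": datetime(2024, 5, 6, 13, 0),
    "c3": datetime(2024, 5, 7, 10, 0),
}
deployments = [
    {"at": datetime(2024, 5, 6, 17, 0), "shas": ["c1", "c2"]},
    {"at": datetime(2024, 5, 8, 10, 0), "shas": ["c3"]},
]


def lead_times_hours(commits, deployments):
    """Hours from each commit to the first production deployment that shipped it."""
    first_deploy = {}
    for dep in sorted(deployments, key=lambda d: d["at"]):
        for sha in dep["shas"]:
            first_deploy.setdefault(sha, dep["at"])  # keep only the earliest deployment
    return {
        sha: (first_deploy[sha] - committed).total_seconds() / 3600
        for sha, committed in commits.items()
        if sha in first_deploy  # undeployed commits have no lead time yet
    }
```

Reporting the median of these per-commit values (8 hours here) keeps one long-lived commit from skewing the team-level figure.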

Open Source Contribution Analysis

Open Source Contribution Analysis tracks the volume, diversity, and quality of contributions to public repositories, including pull requests, issues, reviews, and discussions from external contributors. It measures community health and the effectiveness of open-source engagement strategies.

Pull Request Approval Rate

PR Approval Rate = PRs Approved on First Review / Total PRs Reviewed × 100

Pull Request Approval Rate measures the percentage of pull requests that are approved without requiring changes on their first review cycle. A high rate indicates well-aligned coding standards, effective planning, and good communication between authors and reviewers.

Pull Request Bottleneck Analysis

Pull Request Bottleneck Analysis examines the stages of the PR lifecycle - authoring, review wait, review-in-progress, CI execution, and merge - to identify where delays accumulate. It transforms aggregate cycle time into an actionable breakdown that pinpoints specific process failures.
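A minimal Python sketch of the stage breakdown. It assumes each PR exposes one timestamp per milestone (hypothetical names, expressed in hours since the first commit) and treats the stages as strictly sequential. In practice CI often runs in parallel with review, so real stage boundaries need more care.

```python
from statistics import median

# Hypothetical stage boundaries mirroring the stages named above: (stage, start, end).
STAGES = [
    ("authoring", "first_commit", "ready"),
    ("review wait", "ready", "first_review"),
    ("review in progress", "first_review", "approved"),
    ("CI execution", "approved", "ci_passed"),
    ("merge", "ci_passed", "merged"),
]

# Hypothetical per-PR milestones, in hours since the first commit on the branch.
prs = [
    {"first_commit": 0, "ready": 6, "first_review": 30, "approved": 34, "ci_passed": 35, "merged": 36},
    {"first_commit": 0, "ready": 3, "first_review": 20, "approved": 28, "ci_passed": 30, "merged": 30},
    {"first_commit": 0, "ready": 10, "first_review": 12, "approved": 14, "ci_passed": 15, "merged": 40},
]


def stage_medians(rows):
    """Median hours spent in each PR lifecycle stage."""
    return {name: median(r[end] - r[start] for r in rows) for name, start, end in STAGES}


def bottleneck(rows):
    """The stage with the largest median duration."""
    medians = stage_medians(rows)
    return max(medians, key=medians.get)
```

Using medians per stage means the single PR that sat for 25 hours before merging does not make "merge" look like the bottleneck. Here the consistent delay is review wait.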

Release Velocity

Release Velocity = Number of Releases / Time Period

Release Velocity measures the frequency and speed at which new versions are tagged and published via GitHub Releases or deployment workflows. It encompasses the cadence of releases, the volume of changes per release, and the time between successive releases. Healthy velocity balances speed with stability.

Repository Health Score

Repository Health Score is a composite metric that evaluates key health indicators for a GitHub repository, including documentation completeness, test coverage, CI configuration, dependency freshness, branch protection rules, and recent maintenance activity. It provides a single number for comparing repository maturity across an organisation.

Security Alert Resolution Time

Resolution Time = Alert Resolved Timestamp − Alert Created Timestamp

Security Alert Resolution Time measures the elapsed time from when a security alert (Dependabot, code scanning, or secret scanning) is opened to when it is resolved or dismissed in GitHub. It quantifies the organisation's responsiveness to known vulnerabilities and the effectiveness of its security remediation process.

Security Vulnerability Trends

Security Vulnerability Trends tracks the number, severity, and type of security vulnerabilities discovered across repositories over time. It encompasses Dependabot alerts, code scanning findings, and secret scanning detections. Improving trends indicate maturing security practices and proactive dependency management.

Sprint Velocity Tracking

Sprint Velocity = Sum of Story Points (or Issue Count) Completed in Sprint

Sprint Velocity Tracking measures the amount of work - typically in story points or issue count - completed during each sprint or iteration, as tracked through GitHub Projects. It provides a baseline for capacity planning and helps teams set realistic commitments for upcoming sprints.

Team Collaboration Index

Team Collaboration Index quantifies the degree of cross-functional and cross-team interaction on GitHub, including cross-team code reviews, co-authored commits, discussion participation, and issue triage across repository boundaries. It measures whether knowledge and responsibility are shared or siloed.

Technical Debt Accumulation

Technical Debt Accumulation measures the rate at which technical debt grows across a codebase, using proxies such as TODO/FIXME comment count, aged open issues labelled as tech-debt, increasing cyclomatic complexity, and dependency staleness. Rising accumulation signals that short-term trade-offs are compounding into long-term burden.

Common questions

- How does KPI Tree connect to GitHub? Through GitHub's official MCP server at github/github-mcp-server. It is the reference implementation GitHub maintains, covering repositories, pull requests, issues, Actions, and code security. KPI Tree queries it over OAuth for the remote variant or with a personal access token for the local one. If you have already replicated GitHub events to Snowflake, BigQuery, or another warehouse via Fivetran, Airbyte, or a custom GraphQL job, KPI Tree reads those tables instead, so DORA breakdowns run against your governed copy. Teams without a warehouse engage our professional services team, which stands up the pipeline and ships a dbt semantic layer for engineering performance.

- Which GitHub metrics can I track? Any metric you can derive from your GitHub warehouse tables: PR cycle time, deployment frequency, review turnaround, merge rates, CI pass rates, workflow duration, commit frequency, change failure rate, time to restore, and more. Metrics are defined with SQL or the metric builder and support dimensions like team, repo, and author.

- How is KPI Tree different from engineering analytics tools? KPI Tree solves a different problem. Engineering analytics tools visualise GitHub activity in isolation. KPI Tree connects engineering metrics to product and revenue outcomes in a causal tree with ownership. Many teams use both: an engineering analytics tool for developer-level detail, and KPI Tree for cross-functional accountability.

- How long does setup take? The MCP connection takes minutes, and no warehouse is required. If your GitHub data is already in a warehouse, connecting KPI Tree takes under an hour. If you need AI foundations built from scratch, our professional services team handles the setup end to end. Most teams have a working DORA metric tree within a single session.

- Can KPI Tree track DORA metrics? Yes. Deployment frequency, lead time for changes, change failure rate, and time to restore service can all be defined as metrics from your GitHub warehouse tables. KPI Tree adds what DORA dashboards lack: causal relationships between the four metrics, ownership at each level, and statistical alerts when any metric degrades.

- Does KPI Tree access our source code? No. Whether you connect via MCP or a data warehouse, KPI Tree reads aggregate metrics, not source code, file contents, or commit diffs. It works with metadata like PR counts, timestamps, and workflow statuses.

- Can I break metrics down by team or repository? Yes. Metrics support dimensions like team, repository, author, and workflow name. You can create dimension breakdowns that auto-generate child metrics, such as "Cycle Time (Team: Platform)" and "Cycle Time (Team: Product)", each with its own owner and alerts.

- Does it work with GitHub Enterprise? Yes. The MCP connection works with any GitHub plan, including Enterprise. If you use the data warehouse route, KPI Tree reads from your warehouse tables regardless of the GitHub plan or hosting model. Our professional services team can also set up pipelines for Enterprise environments.
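The dimension breakdowns mentioned in the answers above can be pictured as generating one filtered child metric per distinct dimension value. The Python sketch below is a hypothetical illustration: the `breakdown` function, the `filter` and `owner` fields, and the title-casing rule are assumptions chosen to reproduce the "Cycle Time (Team: Platform)" naming, not KPI Tree's API.

```python
def breakdown(metric_name, dimension, rows, owners):
    """Create one child metric per distinct dimension value found in `rows`."""
    children = {}
    for value in sorted({row[dimension] for row in rows}):
        label = f"{metric_name} ({dimension.title()}: {value.title()})"
        children[label] = {
            "filter": {dimension: value},  # the slice of data this child metric covers
            "owner": owners.get(value),    # None until someone is assigned
        }
    return children


# Hypothetical inputs: dimension values seen in the data, and owners assigned so far.
rows = [{"team": "platform"}, {"team": "product"}, {"team": "platform"}]
owners = {"platform": "Platform lead"}
children = breakdown("Cycle Time", "team", rows, owners)
```

Because children are derived from the values present in the data, a new team appearing in the warehouse would produce a new child metric with no owner yet, which is a gap worth alerting on.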

Your GitHub data already tells the story. Make sure your team reads it.

Connect your warehouse to KPI Tree and turn GitHub activity into an engineering performance system with causal metric trees, RACI ownership, and statistical analysis that ties engineering work to business outcomes.